Introduction

Traditional software architecture has historically been deterministic, modular, and human-instruction-driven. With the emergence of large language models and autonomous AI systems, software increasingly operates in environments characterized by uncertainty, probabilistic reasoning, and adaptive behavior.

In this context, agent-first architecture refers to a paradigm in which:

- AI agents act as primary actors, not auxiliary tools

- systems are designed around goals and outcomes, rather than fixed procedures

- orchestration emerges dynamically through agent interaction, not predefined workflows

This shift mirrors the earlier transition from monoliths to microservices, but it introduces fundamentally different challenges, including non-determinism, explainability, and governance.

Defining AI-Native Systems

An AI-native system is one in which artificial intelligence is not a feature layer but a core organizing principle. Such systems typically exhibit:

- Probabilistic execution: Outputs are not guaranteed to be identical across runs

- Context-awareness: Decisions depend on dynamic inputs and evolving state

- Goal-oriented behavior: Tasks are framed as objectives rather than instructions

- Continuous learning or adaptation: Behavior can change over time in response to feedback, new data, or accumulated memory
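Probabilistic execution is easiest to see at the level of sampling. The sketch below is a simplified illustration, not any particular model's decoder. It assumes a toy three-word vocabulary and hand-picked logits (`VOCAB`, `LOGITS` are hypothetical), and shows how temperature-scaled softmax sampling lets repeated runs diverge, while a temperature near zero collapses to the most likely choice.

```python
import math
import random

def softmax(logits: list[float], temperature: float) -> list[float]:
    """Temperature-scaled softmax; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample(vocab: list[str], logits: list[float], temperature: float,
           rng: random.Random) -> str:
    """Draw one token according to the temperature-scaled distribution."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical toy vocabulary and logits (illustrative values, not from a real model).
VOCAB = ["approve", "reject", "escalate"]
LOGITS = [2.0, 1.5, 0.5]

rng = random.Random()
print([sample(VOCAB, LOGITS, 1.0, rng) for _ in range(5)])   # varies from run to run
print([sample(VOCAB, LOGITS, 0.01, rng) for _ in range(5)])  # effectively always "approve"
```

Fixing the seed or lowering the temperature makes runs repeatable, but the architecture should still be designed on the assumption that outputs vary.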

Agent-first architectures operationalize these characteristics by encapsulating intelligence within discrete, interacting entities.

Core Components of Agent-First Architecture

Agents as First-Class Abstractions

Agents replace traditional services as the primary computational units. Each agent:

- possesses a goal or objective function

- operates with partial autonomy

- can interact with other agents and external systems

Unlike microservices, agents are not strictly deterministic; their behavior may vary depending on context and model inference.
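A minimal sketch of an agent as a first-class abstraction, assuming a pluggable `policy` function stands in for model inference. The names `Agent`, `Message`, `policy`, and `max_autonomous_steps` are hypothetical and not drawn from any specific framework. The goal is explicit state, autonomy is bounded by a step budget, and interaction happens through message passing.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for model inference: (goal, observation) -> action.
# A real agent would call an LLM here, so the result may differ between runs.
Policy = Callable[[str, str], str]

@dataclass
class Message:
    sender: str
    content: str

@dataclass
class Agent:
    name: str
    goal: str                      # the objective this agent pursues
    policy: Policy                 # decides actions; may be non-deterministic
    max_autonomous_steps: int = 3  # partial autonomy: a bounded number of self-directed steps
    inbox: list[Message] = field(default_factory=list)

    def send(self, other: "Agent", content: str) -> None:
        """Interact with another agent by posting to its inbox."""
        other.inbox.append(Message(self.name, content))

    def step(self, observation: str) -> str:
        """Choose the next action toward the goal given the current observation."""
        return self.policy(self.goal, observation)

    def run(self, observation: str) -> list[str]:
        """Act autonomously for at most max_autonomous_steps steps, stopping on 'done'."""
        actions: list[str] = []
        for _ in range(self.max_autonomous_steps):
            action = self.step(observation)
            actions.append(action)
            if action == "done":
                break
            observation = f"result of {action}"
        return actions

def toy_policy(goal: str, observation: str) -> str:
    # Scripted stand-in for an LLM: do one unit of work, then declare completion.
    return "done" if observation.startswith("result") else f"work on {goal}"

writer = Agent("writer", "draft summary", toy_policy)
reviewer = Agent("reviewer", "check summary", toy_policy)
print(writer.run("start"))  # ['work on draft summary', 'done']
writer.send(reviewer, "please review")
```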

Tooling Layer (Augmented Capabilities)

Agents rely on external tools to act in the world:

- APIs

- databases

- execution environments

This creates a hybrid architecture where deterministic tools are orchestrated by non-deterministic agents.
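One way to keep the deterministic and non-deterministic halves apart is a tool registry. The agent only proposes a call, here as JSON text the way many models emit tool calls, and deterministic code validates it before anything executes. This is a sketch under assumptions: `ToolRegistry`, the JSON shape `{"tool": ..., "args": ...}`, and the `add` and `lookup_user` tools are all hypothetical.

```python
import json
from typing import Any, Callable

class ToolRegistry:
    """Deterministic tools exposed to an agent by name (hypothetical interface)."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[Callable[..., Any], set[str]]] = {}

    def register(self, name: str, fn: Callable[..., Any], params: set[str]) -> None:
        self._tools[name] = (fn, params)

    def invoke(self, call_json: str) -> Any:
        """Validate an agent-proposed call (JSON text) before executing it."""
        call = json.loads(call_json)
        name, args = call.get("tool"), call.get("args", {})
        if name not in self._tools:
            raise ValueError(f"unknown tool: {name!r}")
        fn, params = self._tools[name]
        if set(args) != params:
            raise ValueError(
                f"bad arguments for {name}: expected {sorted(params)}, got {sorted(args)}")
        return fn(**args)

# Illustrative deterministic tools; the dict stands in for a database.
USERS = {"42": "Ada"}
registry = ToolRegistry()
registry.register("add", lambda a, b: a + b, {"a", "b"})
registry.register("lookup_user", lambda user_id: USERS.get(user_id), {"user_id"})

# The JSON string stands in for output produced by a (non-deterministic) model.
print(registry.invoke('{"tool": "add", "args": {"a": 2, "b": 3}}'))  # 5
```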

Memory and Context Management

Persistent and short-term memory layers enable agents to:

- retain knowledge across sessions

- build context over time

- coordinate with other agents

Memory becomes a critical infrastructure component, replacing traditional state management patterns.
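The two memory tiers can be sketched with standard containers. This assumes a plain dict stands in for a persistent store (in practice a database or vector store) and that sharing that store is how sessions and agents coordinate. `AgentMemory` and its methods are hypothetical names.

```python
from collections import deque

class AgentMemory:
    """Two-tier memory sketch: a bounded short-term window plus a long-term store."""

    def __init__(self, window: int, long_term: dict[str, list[str]]) -> None:
        self.short_term: deque[str] = deque(maxlen=window)  # recent events only
        self.long_term = long_term  # stands in for a persistent store shared across sessions

    def observe(self, event: str) -> None:
        self.short_term.append(event)  # oldest entries drop off once the window is full

    def remember(self, key: str, fact: str) -> None:
        """Persist a fact so later sessions (or other agents) can retrieve it."""
        self.long_term.setdefault(key, []).append(fact)

    def context(self, key: str) -> list[str]:
        """Build context for the next decision: durable facts, then recent events."""
        return self.long_term.get(key, []) + list(self.short_term)

shared_store: dict[str, list[str]] = {}
session1 = AgentMemory(window=2, long_term=shared_store)
session1.observe("user asked for a report")
session1.remember("user:7", "prefers PDF")

session2 = AgentMemory(window=2, long_term=shared_store)  # new session, same store
session2.observe("user asked for an update")
print(session2.context("user:7"))  # ['prefers PDF', 'user asked for an update']
```

The short-term window is what gets packed into a model's limited context; the long-term store is what survives across sessions.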

Orchestration and Coordination

Instead of centralized orchestration, agent-first systems often employ:

- multi-agent coordination

- negotiation protocols

- emergent workflows

This reduces rigidity but introduces unpredictability and complexity.
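Negotiation protocols can be illustrated with the classic Contract Net pattern: a task is announced, agents bid or decline, and the best bid wins. The document does not prescribe a specific protocol, so treat this as one possible choice. The bidding functions and agent names below are hypothetical, and a real agent might derive its cost estimate from model reasoning.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Bid:
    agent: str
    cost: float

# Hypothetical bidding interface: return an estimated cost, or None to decline.
Bidder = Callable[[str], Optional[float]]

def allocate(task: str, bidders: dict[str, Bidder]) -> Optional[Bid]:
    """Contract-net style allocation: announce a task, collect bids, award the cheapest."""
    bids = []
    for name, bid_fn in bidders.items():
        cost = bid_fn(task)
        if cost is not None:
            bids.append(Bid(name, cost))
    if not bids:
        return None  # nobody can take the task: escalate or re-plan
    return min(bids, key=lambda b: (b.cost, b.agent))  # tie-break by name for reproducibility

bidders = {
    "search_agent": lambda t: 2.0 if "search" in t else None,
    "code_agent": lambda t: 5.0 if "code" in t or "search" in t else None,
}
print(allocate("search docs", bidders))  # Bid(agent='search_agent', cost=2.0)
print(allocate("write code", bidders))   # Bid(agent='code_agent', cost=5.0)
print(allocate("translate", bidders))    # None
```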

Architectural Patterns

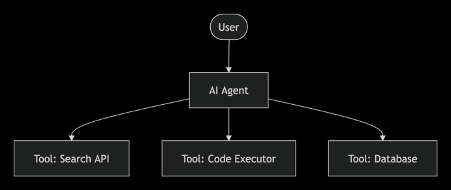

Single-Agent with Tool Augmentation

A single agent orchestrates multiple tools to complete tasks.

P1

Use case: personal assistants, developer copilots

Advantage: simplicity

Limitation: the single agent becomes a bottleneck as the number of tools, tasks, and concurrent requests grows
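The single-agent pattern reduces to a decide, act, observe loop with a step budget. In the sketch below, a scripted function stands in for the LLM. The `Model` interface (returning either `{"tool": ..., "input": ...}` or `{"final": ...}`) and the `search` tool are assumptions made for illustration.

```python
from typing import Callable

Tool = Callable[[str], str]
# Hypothetical model interface: given the transcript so far, return either
# {"tool": name, "input": text} or {"final": answer}.
Model = Callable[[list[str]], dict[str, str]]

def run_single_agent(task: str, model: Model, tools: dict[str, Tool],
                     max_steps: int = 5) -> str:
    """One agent, many tools: loop decide -> act -> observe until a final answer."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        decision = model(transcript)
        if "final" in decision:
            return decision["final"]
        tool = tools.get(decision["tool"])
        observation = tool(decision["input"]) if tool else f"error: no tool {decision['tool']!r}"
        transcript.append(f"{decision['tool']} -> {observation}")
    return "stopped: step budget exhausted"

def scripted_model(transcript: list[str]) -> dict[str, str]:
    # Stand-in for an LLM: search once, then answer from the last observation.
    if len(transcript) == 1:
        return {"tool": "search", "input": "capital of France"}
    return {"final": transcript[-1].split(" -> ")[1]}

tools = {"search": lambda q: "Paris" if "France" in q else "unknown"}
print(run_single_agent("What is the capital of France?", scripted_model, tools))  # Paris
```

Every decision passes through this single loop, which is why the pattern is simple but does not scale out on its own.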

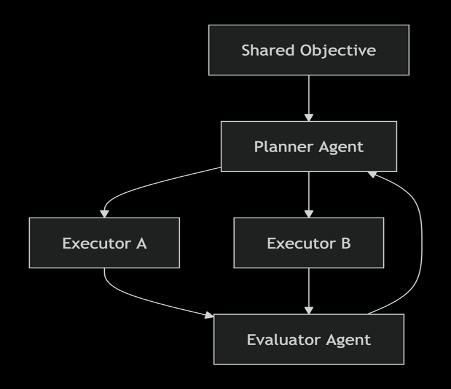

Multi-Agent Systems (MAS)

Multiple specialized agents collaborate to achieve a shared objective.

Examples of roles:

- planner agent

- executor agent

- evaluator agent

Advantage: modular intelligence and scalability

Risk: coordination overhead and emergent failure modes

P2
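The planner, executor, and evaluator roles from diagram P2 can be wired as plain functions to show the feedback loop. Each role here is a hypothetical stand-in. In a real system, each would wrap its own model and prompt, and the round-robin assignment of subtasks is a simplifying assumption.

```python
from typing import Callable

Planner = Callable[[str, list[str]], list[str]]  # (objective, feedback) -> subtasks
Executor = Callable[[str], str]                  # subtask -> result
Evaluator = Callable[[list[str]], list[str]]     # results -> feedback (empty = accepted)

def run_mas(objective: str, plan: Planner, executors: list[Executor],
            evaluate: Evaluator, max_rounds: int = 3) -> list[str]:
    """Planner -> executors -> evaluator, feeding evaluator output back to the planner."""
    feedback: list[str] = []
    for _ in range(max_rounds):
        subtasks = plan(objective, feedback)
        # Assign subtasks round-robin across the available executors.
        results = [executors[i % len(executors)](t) for i, t in enumerate(subtasks)]
        feedback = evaluate(results)
        if not feedback:
            return results
    raise RuntimeError(f"no accepted result after {max_rounds} rounds: {feedback}")

def planner(objective: str, feedback: list[str]) -> list[str]:
    # Split the objective in two; add a citation step once the evaluator asks for it.
    steps = [f"{objective}: part 1", f"{objective}: part 2"]
    return steps + ["add citations"] if feedback else steps

executor_a = lambda t: f"A did '{t}'"
executor_b = lambda t: f"B did '{t}'"

def evaluator(results: list[str]) -> list[str]:
    return [] if any("citations" in r for r in results) else ["missing citations"]

print(run_mas("report", planner, [executor_a, executor_b], evaluator))
```

The `max_rounds` cap is one simple defense against the emergent failure mode where agents loop indefinitely without converging.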

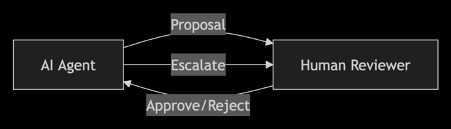

Human-in-the-Loop (HITL) Architecture

Human oversight is embedded in decision-making loops.

Functions:

- validation

- correction

- escalation

This pattern is critical in regulated domains such as law, finance, and healthcare.

P3
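The three HITL functions (validation, correction, escalation) can be expressed as a routing gate in front of the agent's actions. This is a sketch under assumptions: the `risk` and `confidence` scores, both thresholds, and the `Reviewer` callback (standing in for a human UI or ticket queue) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class Proposal:
    action: str
    risk: float        # 0.0 (harmless) .. 1.0 (critical); how it is scored is an assumption
    confidence: float  # the agent's own confidence in the proposal

# Hypothetical reviewer interface: returns (approved, optional corrected action).
Reviewer = Callable[[Proposal], tuple[bool, Optional[str]]]

def gate(p: Proposal, review: Reviewer, risk_threshold: float = 0.5,
         min_confidence: float = 0.7) -> str:
    """Route a proposal: auto-execute, validate/correct with a human, or escalate."""
    if p.confidence < min_confidence:
        return f"escalated: {p.action}"  # agent is unsure: hand the case to a human
    if p.risk < risk_threshold:
        return f"executed: {p.action}"  # low risk: no human in the loop needed
    approved, correction = review(p)  # validation step
    if not approved:
        return f"rejected: {p.action}"
    return f"executed: {correction or p.action}"  # correction step, if any

def reviewer(p: Proposal) -> tuple[bool, Optional[str]]:
    # Stand-in for a human decision: approve refunds but cap the amount (a correction).
    if p.action.startswith("refund"):
        return True, "refund 100 EUR"
    return False, None

print(gate(Proposal("send reminder email", risk=0.1, confidence=0.9), reviewer))
print(gate(Proposal("refund 500 EUR", risk=0.8, confidence=0.95), reviewer))
print(gate(Proposal("close account", risk=0.9, confidence=0.4), reviewer))
```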

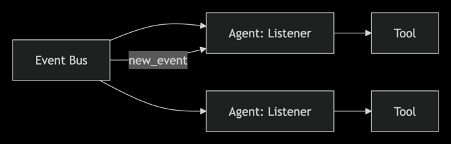

Event-Driven Agent Systems

Agents react to events rather than following linear workflows.

Characteristics:

- asynchronous execution

- reactive behavior

- loosely coupled components

P4
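The event-driven pattern can be sketched with an in-process publish/subscribe bus built on `asyncio`. This assumes the `EventBus` class is a stand-in for Kafka or a message queue, and the billing and shipping agents are hypothetical. Publishers never call agents directly (loose coupling), and subscribers run concurrently (asynchronous execution).

```python
import asyncio
from collections import defaultdict
from typing import Awaitable, Callable

Handler = Callable[[dict], Awaitable[None]]

class EventBus:
    """Minimal in-process pub/sub; a stand-in for Kafka or a message queue."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    async def publish(self, topic: str, event: dict) -> None:
        # Fan out to every subscriber concurrently and wait for all of them.
        await asyncio.gather(*(h(event) for h in self._subscribers[topic]))

log: list[str] = []

async def billing_agent(event: dict) -> None:
    await asyncio.sleep(0.01)  # simulate slower deliberation or tool use
    log.append(f"billing saw order {event['id']}")

async def shipping_agent(event: dict) -> None:
    log.append(f"shipping saw order {event['id']}")

async def main() -> None:
    bus = EventBus()
    bus.subscribe("order_created", billing_agent)
    bus.subscribe("order_created", shipping_agent)
    await bus.publish("order_created", {"id": 1})
    print(log)  # shipping finishes first because billing awaits longer

asyncio.run(main())
```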

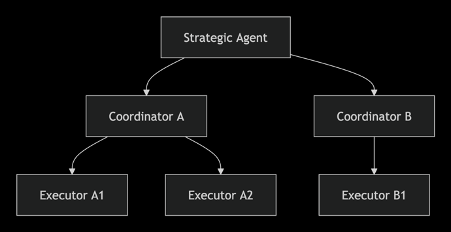

Hierarchical Agent Architectures

Agents are organized in layers:

- high-level strategic agents

- mid-level coordinators

- low-level executors

This pattern mirrors organizational structures and improves control, since each layer has a bounded scope of authority and a clear point of escalation.

P5
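The layered structure in diagram P5 can be modeled as a tree in which inner nodes decompose tasks and leaves execute them. The decomposition lambdas below are hypothetical stand-ins for model-driven planning, and round-robin delegation is a simplifying assumption. The trace shows that every level records what it was asked to do, which supports the control and auditability the pattern aims for.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class HAgent:
    """An agent in a hierarchy: inner nodes decompose and delegate, leaves execute."""
    name: str
    # Hypothetical decomposition policy for strategic/coordinator agents; None for executors.
    decompose: Optional[Callable[[str], list[str]]] = None
    children: list["HAgent"] = field(default_factory=list)

    def handle(self, task: str, trace: list[str]) -> None:
        trace.append(f"{self.name}: {task}")  # every level records its assignment
        if not self.children:
            return  # executor: a real agent would call tools here
        subtasks = self.decompose(task) if self.decompose else [task]
        for i, sub in enumerate(subtasks):
            self.children[i % len(self.children)].handle(sub, trace)  # round-robin delegation

exec_a1, exec_a2, exec_b1 = HAgent("Executor A1"), HAgent("Executor A2"), HAgent("Executor B1")
coord_a = HAgent("Coordinator A", lambda t: [f"{t} / step 1", f"{t} / step 2"], [exec_a1, exec_a2])
coord_b = HAgent("Coordinator B", lambda t: [f"{t} / step 1"], [exec_b1])
strategic = HAgent("Strategic Agent", lambda t: [f"{t}: research", f"{t}: build"], [coord_a, coord_b])

trace: list[str] = []
strategic.handle("launch feature", trace)
print("\n".join(trace))
```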

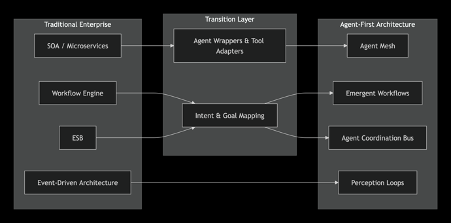

From Enterprise Patterns to Agent-First Architectures

Legacy enterprise systems rely on mature patterns that have been carefully refined over decades. As we move to agent-first design, these patterns do not disappear; they migrate and transform. Below is a mapping of key enterprise patterns and their agentic counterparts.

| Traditional Enterprise Pattern | Agent-First Evolution | Key Shifts |
|---|---|---|
| Service-Oriented Architecture (SOA) / Microservices | Agents as dynamic services with goals, memory, and probabilistic behavior | Fixed API contracts → goal specifications; synchronous calls → negotiation-based interactions |
| API Gateway | Agent Gateway / Agent Mesh | Routing based on intent and context; agents discover each other via capability registries |
| Enterprise Service Bus (ESB) | Agent Coordination Bus with semantic message interpretation | Structural transformation → semantic understanding; agents decide routing dynamically |
| Business Process Management (BPM, modeled in BPMN) / Workflow Engines | Emergent workflows through multi-agent collaboration | Predefined flows → goal-driven, dynamically composed steps; agents negotiate task allocation |
| Event-Driven Architecture (Kafka, message queues) | Agent perception loops | Events become triggers for agent deliberation; agents subscribe to environment changes and act proactively |
| Saga Pattern (distributed transactions) | Agent-based compensation and negotiation | Compensation logic is generated or reasoned about; agents collaboratively resolve consistency |
| CQRS / Event Sourcing | Agent memory and context reconstruction | Event store → episodic memory; agents replay events to reconstruct state and learn |
| Singleton / Coordinator | Hierarchical or market-based agent coordination | Hardcoded singleton → emergent leadership or elected coordinator agent |
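The CQRS / Event Sourcing row can be made concrete: if an agent's experience is an append-only log, its state at any point is a fold over that log, and replaying a prefix answers "what did the agent know then?". This is a sketch with hypothetical event kinds (`task_assigned`, `task_completed`), not a specific event-store API.

```python
from dataclasses import dataclass
from functools import reduce
from typing import Optional

@dataclass(frozen=True)
class Event:
    kind: str  # illustrative kinds: "task_assigned", "task_completed"
    task: str

def apply_event(state: dict[str, str], event: Event) -> dict[str, str]:
    """Pure transition function: fold one event into the reconstructed state."""
    new_state = dict(state)
    if event.kind == "task_assigned":
        new_state[event.task] = "in_progress"
    elif event.kind == "task_completed":
        new_state[event.task] = "done"
    return new_state

def replay(log: list[Event], upto: Optional[int] = None) -> dict[str, str]:
    """Rebuild state from the append-only log; `upto` replays only a prefix."""
    return reduce(apply_event, log[:upto], {})

episodic_log = [
    Event("task_assigned", "summarize contract"),
    Event("task_assigned", "check clauses"),
    Event("task_completed", "summarize contract"),
]
print(replay(episodic_log))          # {'summarize contract': 'done', 'check clauses': 'in_progress'}
print(replay(episodic_log, upto=1))  # {'summarize contract': 'in_progress'}
```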

Migration Journey Diagram

P6

This migration is not a big-bang replacement. Agent-first architectures typically coexist with traditional services through tool layers, incremental wrapping of existing systems, and gradual capability enhancement. The organizational knowledge embedded in patterns such as saga, circuit breaker, and idempotent consumer becomes the guardrail logic that constrains agent behavior.
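To show how established patterns turn into guardrails, the sketch below wraps a tool that an agent calls with two of them. The idempotent-consumer check makes a retried request reuse the cached result instead of repeating side effects. The circuit breaker stops calls to a failing tool for a cooldown period. `GuardedTool`, its parameters, and the `charge` tool are hypothetical, and saga-style compensation is not shown.

```python
import time
from typing import Any, Callable, Optional

class GuardedTool:
    """Hypothetical wrapper applying idempotent-consumer and circuit-breaker guardrails."""

    def __init__(self, fn: Callable[..., Any], max_failures: int = 3,
                 cooldown: float = 30.0) -> None:
        self.fn = fn
        self.max_failures = max_failures
        self.cooldown = cooldown
        self.failures = 0
        self.opened_at: Optional[float] = None
        self.seen: dict[str, Any] = {}  # request_id -> cached result

    def call(self, request_id: str, **kwargs: Any) -> Any:
        if request_id in self.seen:
            return self.seen[request_id]  # agent retried the same action: no repeated side effect
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.cooldown:
                raise RuntimeError("circuit open: tool temporarily disabled")
            self.opened_at = None  # cooldown elapsed: allow a trial call (half-open)
        try:
            result = self.fn(**kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = time.monotonic()  # trip the breaker
            raise
        self.failures = 0
        self.seen[request_id] = result
        return result

payments: list[int] = []

def charge(amount: int) -> str:
    payments.append(amount)  # the side effect we must not repeat
    return f"charged {amount}"

tool = GuardedTool(charge)
tool.call("req-1", amount=50)
tool.call("req-1", amount=50)  # duplicate caused by an agent retry
print(payments)                # [50]: the side effect happened once
```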

Design Principles

- Goal-Oriented Design: Systems should be designed around desired outcomes, not rigid instructions.

- Probabilistic Tolerance: Architectures must tolerate variability in outputs, partial correctness, and iterative refinement.

- Observability and Explainability: Given non-determinism, systems must provide traceability of decisions, reasoning logs, and audit trails.

- Safety and Guardrails: Agent behavior must be constrained through policy layers, validation mechanisms, and sandboxed execution.
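Two of these principles, guardrails and observability, can share one component: a policy layer that checks each proposed action and records every decision with the agent's stated reasoning. This is a minimal sketch. The rule set (a tool allowlist and an amount cap), the `PolicyLayer` name, and the audit-record fields are assumptions, and sandboxed execution is out of scope here.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any

@dataclass
class PolicyLayer:
    """Check proposed actions against simple rules and keep an audit trail (a sketch)."""
    allowed_tools: set[str]
    max_amount: float
    audit: list[dict[str, Any]] = field(default_factory=list)

    def check(self, agent: str, tool: str, args: dict[str, Any], reasoning: str) -> bool:
        violations = []
        if tool not in self.allowed_tools:
            violations.append(f"tool {tool!r} not allowed")
        if float(args.get("amount", 0)) > self.max_amount:
            violations.append(f"amount exceeds {self.max_amount}")
        # Record every decision, allowed or not, together with the agent's stated reasoning.
        self.audit.append({
            "ts": time.time(), "agent": agent, "tool": tool, "args": args,
            "reasoning": reasoning, "allowed": not violations, "violations": violations,
        })
        return not violations

policy = PolicyLayer(allowed_tools={"send_email", "issue_refund"}, max_amount=200)
policy.check("support-bot", "issue_refund", {"amount": 150}, "order arrived damaged")
policy.check("support-bot", "delete_account", {}, "user seemed upset")
print(json.dumps([{k: e[k] for k in ("tool", "allowed", "violations")} for e in policy.audit]))
```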

Challenges and Risks

- Non-Determinism: Agent outputs may vary, complicating testing and debugging.

- Alignment and Control: Ensuring agents act in accordance with system goals and ethical constraints remains a central challenge.

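- Security: Agents with tool access can leak sensitive data or take unauthorized actions, for example when instructions injected into retrieved content (prompt injection) redirect their behavior.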

- Legal and Regulatory Implications: Novel questions about liability, intent in autonomous systems, and explainability standards are particularly relevant for regulated sectors.

Implications for Software Engineering

Agent-first architectures require rethinking traditional roles:

- Developers become system designers and orchestrators

- Testing shifts toward behavioral evaluation and simulation

- UX evolves into interaction design between humans and agents

- Documentation must evolve from static specifications to dynamic system narratives
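Behavioral evaluation replaces "assert the exact output" with "run many times and measure how often a property holds". In the sketch below, the agent under test (`classify_ticket`) is hypothetical and uses a seeded random generator as a stand-in for sampling variability. The property and the 0.95 threshold are illustrative choices.

```python
import random
from typing import Callable

def classify_ticket(text: str, rng: random.Random) -> str:
    # Hypothetical agent under test: the label wording varies across runs.
    if "refund" in text.lower():
        return rng.choice(["billing", "billing", "billing", "refunds"])
    return rng.choice(["general", "general", "other"])

def pass_rate(agent: Callable[[str, random.Random], str], text: str,
              check: Callable[[str], bool], runs: int = 50, seed: int = 0) -> float:
    """Run the agent repeatedly and return the fraction of runs where the property holds."""
    rng = random.Random(seed)  # seeded so the evaluation itself is reproducible
    return sum(check(agent(text, rng)) for _ in range(runs)) / runs

# Property: any money-related label is acceptable; the exact label is not asserted.
acceptable = {"billing", "refunds"}
rate = pass_rate(classify_ticket, "I want a refund for order 17", lambda label: label in acceptable)
assert rate >= 0.95, f"behavioral check failed: pass rate {rate:.2f}"
print(f"pass rate: {rate:.2f}")
```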

Future Directions

Key areas for future research include:

- Standardized protocols for agent communication (e.g., the FIPA Agent Communication Language and newer efforts such as the Agent2Agent (A2A) protocol)

- Formal verification methods for probabilistic systems

- Governance frameworks for multi-agent ecosystems

- Integration of legal compliance into architectural design

Conclusion

Agent-first architectures represent a fundamental shift in software design, aligning systems with the capabilities and limitations of modern AI. By treating agents as primary actors, these architectures enable more adaptive, scalable, and intelligent systems, but they also introduce new complexities in reliability, governance, and legal accountability. The migration from established enterprise patterns to agentic forms provides a pragmatic path forward, allowing organizations to leverage existing architectural wisdom while embracing the transformative potential of AI-native design.

Source code:

P1

```mermaid
graph TD
    User([User]) --> Agent[AI Agent]
    Agent --> Tool1[Tool: Search API]
    Agent --> Tool2[Tool: Code Executor]
    Agent --> Tool3[Tool: Database]
```

P2

```mermaid
graph TD
    Task[Shared Objective] --> Planner[Planner Agent]
    Planner --> Executor1[Executor A]
    Planner --> Executor2[Executor B]
    Executor1 --> Evaluator[Evaluator Agent]
    Executor2 --> Evaluator
    Evaluator --> Planner
```

P3

```mermaid
graph LR
    Agent[AI Agent] -- Proposal --> Human[Human Reviewer]
    Human -- Approve/Reject --> Agent
    Agent -- Escalate --> Human
```

P4

```mermaid
graph LR
    %% One labeled edge per listener (the original drew EventBus -> AgentA twice)
    EventBus[Event Bus] -- new_event --> AgentA[Agent: Listener A]
    EventBus -- new_event --> AgentB[Agent: Listener B]
    AgentA --> ToolA[Tool A]
    AgentB --> ToolB[Tool B]
```

P5

```mermaid
graph TD
    Strategic[Strategic Agent] --> Coord1[Coordinator A]
    Strategic --> Coord2[Coordinator B]
    Coord1 --> Exec1[Executor A1]
    Coord1 --> Exec2[Executor A2]
    Coord2 --> Exec3[Executor B1]
```

P6

```mermaid
graph LR
    subgraph Traditional [Traditional Enterprise]
        SOA[SOA / Microservices]
        BPM[Workflow Engine]
        ESB[ESB]
        EDA[Event-Driven Architecture]
    end
    subgraph Transition [Transition Layer]
        Proxy[Agent Wrappers & Tool Adapters]
        Semantics[Intent & Goal Mapping]
    end
    subgraph Agentic [Agent-First Architecture]
        AgentMesh[Agent Mesh]
        EmergFlow[Emergent Workflows]
        CoordBus[Agent Coordination Bus]
        Perception[Perception Loops]
    end
    SOA --> Proxy --> AgentMesh
    BPM --> Semantics --> EmergFlow
    ESB --> Semantics --> CoordBus
    EDA --> Perception
```
